When building Enterprise AI, simply layering a large language model (LLM) on top of existing systems can seem like trying to build a skyscraper on a swamp. The real challenge isn't the AI itself; it’s the sometimes chaotic foundation of data that feeds it. Without robust AI orchestration, these projects often fail, leaving organizations with a fragmented, insecure, and unreliable AI "Frankenstein stack."

AI orchestration is the invisible layer that connects the dots, manages the complexity, and ensures your AI can deliver on its promise. In enterprise settings, it’s the difference between a promising pilot project and a truly scalable, future-proof generative AI platform.

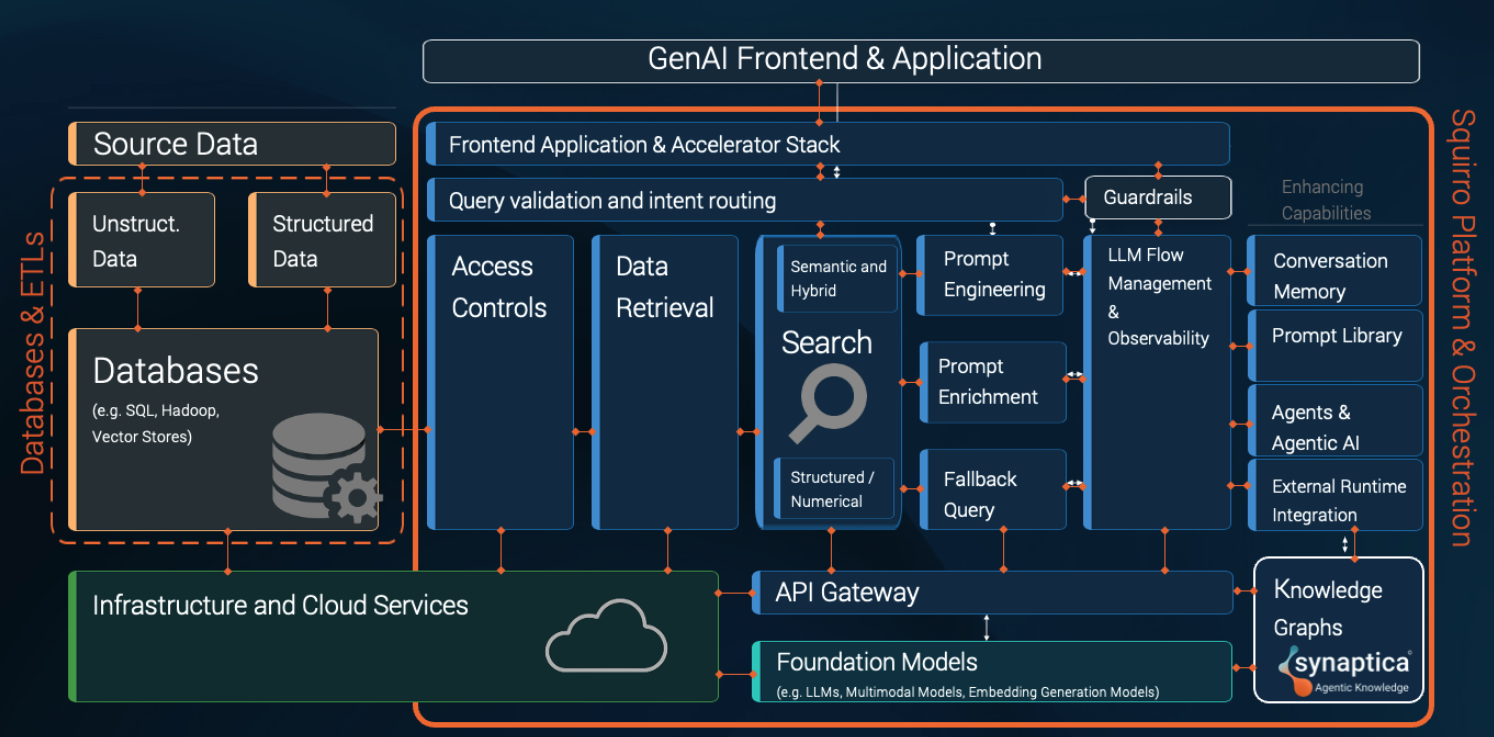

The Anatomy of an AI Platform

A full-stack enterprise AI platform is a complex system of interconnected technology components, each with a specific purpose. Think of it as a black box that connects to your infrastructure, draws on your data, and leverages foundational large language models to power a suite of GenAI applications that impress your customers, empower your workforce, and support your organization in achieving its business objectives.

Unpacking AI Orchestration

AI orchestration is all about managing the interactions between the functional building blocks that make up an enterprise AI platform, securely, seamlessly, and with sufficient flexibility to allow it to respond to evolving economic, technological, and regulatory boundary conditions.

Building an enterprise AI platform and orchestrating all the interactions within it in-house can sound like an exciting challenge. In reality, it is extremely difficult and can become an operational quagmire, because a single poorly integrated component or weak link can break the entire chain. The operational challenges we outlined last year still hold true:

Scaling a GenAI solution to large-scale production levels requires meticulous alignment of various components. Each step (and a few more) is essential for scaling AI to a full production rollout, and without prior experience, each comes with a steep, costly, and time-consuming learning curve.

Here’s a walkthrough of the critical components and the interactions among them that make trusted enterprise AI possible.

The Data and Knowledge Hub

At its core, a powerful AI platform needs a complete picture of your organization's data. In a platform based on retrieval-augmented generation (RAG), this begins with data ingestion: integrating your data from sources like Salesforce, SAP, and SharePoint, translating it into vector embeddings, indexing it, enriching it with metadata, and perhaps connecting it to an enterprise taxonomy or knowledge graph to give that data structure and meaning. This process, ideally aided by an AI-powered content classification tool like the Squirro Classifier, turns a messy collection of documents into a network of interconnected facts and relationships that the RAG platform can draw on.
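As a rough illustration of the ingestion steps above (embed, index, enrich with metadata), here is a minimal sketch. Everything in it is an assumption made for illustration: `toy_embed` is a hashing stand-in for a real embedding model, and `IndexedDoc`, `ingest`, and the record fields are hypothetical names, not part of any Squirro API.

```python
import hashlib
import math
from dataclasses import dataclass, field

DIM = 8  # toy vector size; real embedding models use far more dimensions


def toy_embed(text: str) -> list[float]:
    """Hypothetical stand-in for an embedding model: hash words into buckets."""
    vec = [0.0] * DIM
    for word in text.lower().split():
        bucket = int(hashlib.md5(word.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]  # unit length, so dot product equals cosine


@dataclass
class IndexedDoc:
    doc_id: str
    text: str
    vector: list[float]
    metadata: dict = field(default_factory=dict)


def ingest(records: list[dict]) -> dict[str, IndexedDoc]:
    """Embed each record, attach metadata, and store it in an in-memory index."""
    index: dict[str, IndexedDoc] = {}
    for rec in records:
        index[rec["id"]] = IndexedDoc(
            doc_id=rec["id"],
            text=rec["text"],
            vector=toy_embed(rec["text"]),
            metadata={
                "source": rec["source"],
                "topic": rec.get("topic", "unclassified"),
            },
        )
    return index
```

In a production setting the in-memory dictionary would be replaced by a vector store, and the `topic` field would come from a classifier rather than from the source record.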

When a user asks a question, the system uses semantic search and hybrid search to find information based on meaning and context. Structured data search based on data virtualization then queries databases for real-time data like prices or sales figures. Being able to access and leverage enterprise data to augment the user prompt ensures that AI’s outputs are grounded in accurate, relevant information, directly addressing the risk of hallucination and building trust.
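One common way to combine semantic and keyword signals is a weighted sum of the two scores. The sketch below assumes that approach; the function names, the `alpha` weight, and the document layout are illustrative choices, not a description of how any particular platform ranks results.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def keyword_score(query: str, text: str) -> float:
    """Fraction of query terms that appear in the document text."""
    q_terms = set(query.lower().split())
    t_terms = set(text.lower().split())
    return len(q_terms & t_terms) / len(q_terms) if q_terms else 0.0


def hybrid_search(query, query_vec, docs, alpha=0.5, top_k=3):
    """Rank docs by alpha * semantic score + (1 - alpha) * keyword score."""
    scored = []
    for doc in docs:
        score = (
            alpha * cosine(query_vec, doc["vector"])
            + (1 - alpha) * keyword_score(query, doc["text"])
        )
        scored.append((score, doc["id"]))
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:top_k]]
```

Setting `alpha` closer to 1 favors meaning over exact wording; closer to 0 favors literal term matches such as product codes.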

The Command Center

The orchestration platform acts as the brain that coordinates the entire AI workflow. Query validation and intent routing is the first step, analyzing a user's request to determine its purpose: is it a simple question, a request to search a database, or a command to perform a task? It then routes the request to the appropriate system.
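To make the routing step concrete, here is a deliberately simple, rule-based sketch. Real systems typically use a trained classifier or an LLM for intent detection; the keyword rules, intent labels, and handler names below are all hypothetical.

```python
ACTION_VERBS = ("create ", "order ", "send ", "schedule ")
STRUCTURED_TERMS = ("price", "revenue", "sales figures", "how many")


def classify_intent(query: str) -> str:
    """Map a raw query to one of four illustrative intent labels."""
    q = query.lower().strip()
    if not q:
        return "invalid"
    if q.startswith(ACTION_VERBS):
        return "action"
    if any(term in q for term in STRUCTURED_TERMS):
        return "structured_search"
    return "question"


# Hypothetical handlers; each would call a different downstream system.
HANDLERS = {
    "invalid": lambda q: "Please enter a question.",
    "action": lambda q: f"[agent] executing: {q}",
    "structured_search": lambda q: f"[database] querying: {q}",
    "question": lambda q: f"[rag] answering: {q}",
}


def route(query: str) -> str:
    """Classify the query, then dispatch it to the matching handler."""
    return HANDLERS[classify_intent(query)](query)
```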

The API gateway is the central hub for all this activity. It manages incoming and outgoing requests, ensuring they are secure and properly formatted. Meanwhile, LLM flow management and observability tracks the entire process from start to finish, allowing users to select the LLM of their choice for their applications and providing a crucial "paper trail" to monitor performance, troubleshoot issues, and ensure everything is easily auditable. This level of oversight is essential for maintaining control and accountability in a complex system.
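The "paper trail" idea can be sketched as a decorator that records every pipeline step, its outcome, and its duration. This is an assumption-laden illustration: `traced`, `AUDIT_LOG`, and the example `retrieve` step are hypothetical, and a real deployment would write to a tracing or logging backend rather than a list in memory.

```python
import functools
import time

AUDIT_LOG: list[dict] = []  # in-memory stand-in for a real audit store


def traced(step_name: str):
    """Record the name, status, and duration of each call to the wrapped step."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            status = "error"  # overwritten only if the call succeeds
            try:
                result = fn(*args, **kwargs)
                status = "ok"
                return result
            finally:
                AUDIT_LOG.append({
                    "step": step_name,
                    "status": status,
                    "seconds": time.perf_counter() - start,
                })
        return wrapper
    return decorator


@traced("retrieve")
def retrieve(query: str) -> list[str]:
    """Hypothetical retrieval step used only to demonstrate the decorator."""
    return [f"passage about {query}"]
```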

The Prompting Engine

The quality of an AI’s output is directly tied to the quality of its input. Prompt engineering is the art of crafting an optimal query to get the desired result. The orchestration layer automates this process through prompt enrichment, which, in a RAG system, automatically adds relevant context to the user's query – relevant proprietary enterprise data, past conversation history, personal user data, or search results – before it ever reaches the LLM.
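Prompt enrichment, as described above, amounts to assembling retrieved context and conversation history around the user's question before the model sees it. The following is a minimal sketch under that reading; the template wording, the `enrich_prompt` name, and the `max_passages` cap are illustrative assumptions.

```python
def enrich_prompt(
    user_query: str,
    retrieved_passages: list[str],
    history: list[tuple[str, str]],
    max_passages: int = 3,
) -> str:
    """Wrap the user's query with retrieved context and prior turns."""
    context = "\n".join(f"- {p}" for p in retrieved_passages[:max_passages])
    past = "\n".join(f"{role}: {text}" for role, text in history)
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Conversation so far:\n{past}\n\n"
        f"User question: {user_query}"
    )
```

Capping the number of passages is one simple way to keep the enriched prompt within the model's context window.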

To guarantee consistently reliable results, the system employs a prompt library, a repository of pre-tested, high-quality prompts for common business tasks. It also includes a fallback mechanism, which provides a simple, pre-written response if the primary query fails, ensuring the user is never left with an error message.
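A prompt library with a fallback can be as simple as a dictionary of templates plus a guarded call. The sketch below assumes that `llm_call` is any function that takes a prompt string and returns text; the template, task name, and fallback wording are hypothetical.

```python
PROMPT_LIBRARY = {
    # Hypothetical pre-tested template for a common task.
    "summarize": "Summarize the following text in three bullet points:\n{text}",
}

FALLBACK_RESPONSE = (
    "Sorry, I could not complete that request. Please try rephrasing it."
)


def run_with_fallback(task: str, llm_call, **fields) -> str:
    """Fill a library prompt, call the model, and fall back on any failure."""
    try:
        prompt = PROMPT_LIBRARY[task].format(**fields)
        answer = llm_call(prompt)
        if not answer or not answer.strip():
            raise ValueError("empty model response")
        return answer
    except Exception:
        # Broad catch is deliberate here: the user should see a friendly
        # message, while the real error would be logged for operators.
        return FALLBACK_RESPONSE
```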

The Guardian and the Enforcer

In any enterprise-grade AI setup, security and data privacy are paramount. Robust enforcement of access controls ensures that an AI agent or a user can only access the data they are authorized to see. This is especially complex when dealing with vectorized data, and it’s the orchestration platform’s task to manage this granular access at scale. This protection is non-negotiable for data privacy and regulatory compliance.
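One way to enforce access controls on vectorized data is to carry each chunk's permissions as metadata and filter retrieved chunks before they are added to the prompt. The sketch below assumes group-based permissions; the `allowed_groups` field and function name are illustrative, not a specific product's schema.

```python
def filter_by_access(chunks: list[dict], user_groups: list[str]) -> list[dict]:
    """Keep only chunks whose allowed groups overlap the user's groups."""
    allowed = set(user_groups)
    return [c for c in chunks if allowed & set(c["allowed_groups"])]
```

For example, a user in the `sales` group would see the first chunk below but not the second:

```python
chunks = [
    {"id": "deal-notes", "allowed_groups": ["sales", "management"]},
    {"id": "salary-bands", "allowed_groups": ["hr"]},
]
visible = filter_by_access(chunks, ["sales"])
```

Filtering after retrieval is the simplest variant; many vector stores can also apply such a filter during the search itself, which avoids retrieving restricted content at all.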

Beyond access controls, query validation and intent routing act as another critical first line of defense, filtering out malicious or inappropriate requests, protecting against AI-specific attack vectors such as prompt injection. Finally, a robust system of AI guardrails ensures that all outputs are safe and compliant, preventing the AI from generating harmful or unethical content.
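A very basic form of query validation is sketched below. Pattern matching like this catches only the crudest injection attempts and is no substitute for layered defenses; the patterns, length limit, and return format are assumptions chosen for illustration.

```python
import re

# Hypothetical patterns; real deployments would use far broader detection.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your|the) system prompt", re.IGNORECASE),
]


def validate_query(query: str, max_length: int = 2000) -> tuple[bool, str]:
    """Return (is_valid, reason) for an incoming user query."""
    if not query.strip():
        return (False, "empty query")
    if len(query) > max_length:
        return (False, "query too long")
    for pattern in SUSPICIOUS_PATTERNS:
        if pattern.search(query):
            return (False, "possible prompt injection")
    return (True, "ok")
```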

The Advanced Capabilities

Once the foundational components are in place, the AI orchestration platform can include a suite of advanced capabilities that turn a simple chatbot into a powerful business tool.

Conversation memory allows the AI to recall past interactions, making conversations feel natural and personal, remembering context so users don't have to repeat themselves. Paired with a centralized prompt library, teams can quickly access and reuse battle-tested, high-quality prompts for common business tasks, ensuring consistent and reliable outputs across the organization.
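Conversation memory can be approximated with a bounded buffer of recent turns that is replayed into each new prompt. This is a minimal sketch under that assumption; the class name and the fixed turn limit are illustrative, and production systems often summarize or selectively retrieve older turns instead of simply dropping them.

```python
from collections import deque


class ConversationMemory:
    """Keep the most recent turns of a conversation, discarding the oldest."""

    def __init__(self, max_turns: int = 5):
        self._turns: deque[tuple[str, str]] = deque(maxlen=max_turns)

    def add(self, role: str, text: str) -> None:
        self._turns.append((role, text))

    def as_context(self) -> str:
        """Render the remembered turns as text for inclusion in a prompt."""
        return "\n".join(f"{role}: {text}" for role, text in self._turns)
```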

Enterprise taxonomies and knowledge graphs provide a structured, interconnected source of truth that makes AI smarter and more precise. Instead of just answering a question, a knowledge-graph-powered AI can reason about the relationships between entities, linking a specific customer to their product support tickets, their sales history, and their location. This allows for far more accurate and complex responses.
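The customer example above can be pictured as a small set of subject-relation-object triples. The sketch below stores them in a plain dictionary; the entity names, relation labels, and helper functions are made up for illustration, and a real knowledge graph would live in a dedicated graph store.

```python
from collections import defaultdict

# Hypothetical triples: (subject, relation, object)
TRIPLES = [
    ("customer:acme", "filed", "ticket:1042"),
    ("customer:acme", "purchased", "product:router-x"),
    ("customer:acme", "located_in", "city:zurich"),
    ("ticket:1042", "concerns", "product:router-x"),
]


def build_graph(triples):
    """Index triples by subject for quick lookup of outgoing relations."""
    graph = defaultdict(list)
    for subject, relation, obj in triples:
        graph[subject].append((relation, obj))
    return graph


def related(graph, entity, relation=None):
    """Return entities linked from `entity`, optionally filtered by relation."""
    return [
        obj
        for rel, obj in graph.get(entity, [])
        if relation is None or rel == relation
    ]
```

Following `filed` from the customer and then `concerns` from the ticket links a support ticket back to a product the same customer purchased, which is the kind of multi-hop connection a flat document search would miss.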

Advanced agentic AI can reason, plan, and execute multi-step actions on your behalf. This is made possible through external runtime integration, which allows the AI to call external APIs and functions. For example, an AI agent could take a user's request to "find and order new office supplies," check inventory with one system, get pricing from another, and then place the order.

The orchestration platform manages each of these processes, ensuring the agent stays on track and safely interacts with your business systems.
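The office-supplies example can be sketched as a plan of tool calls executed under an allow-list and a step limit, which is one simple way an orchestrator might keep an agent "on track". All tools here are hypothetical stubs with hard-coded data; in practice the plan would be produced by an LLM and the tools would call real business systems.

```python
INVENTORY = {"printer paper": 0}   # stub data for illustration
PRICES = {"printer paper": 4.5}


def check_inventory(item: str) -> int:
    return INVENTORY.get(item, 0)


def get_price(item: str) -> float:
    return PRICES[item]


def place_order(item: str, quantity: int) -> dict:
    return {"item": item, "quantity": quantity, "status": "ordered"}


TOOLS = {
    "check_inventory": check_inventory,
    "get_price": get_price,
    "place_order": place_order,
}


def run_plan(plan, allowed_tools, max_steps=5):
    """Execute (tool_name, kwargs) steps, enforcing an allow-list and step cap."""
    if len(plan) > max_steps:
        raise RuntimeError("plan exceeds step limit")
    results = []
    for tool_name, kwargs in plan:
        if tool_name not in allowed_tools:
            raise PermissionError(f"tool not permitted: {tool_name}")
        results.append(TOOLS[tool_name](**kwargs))
    return results
```

Checking the allow-list before each call means an agent that plans an unauthorized step is stopped before it touches the underlying system.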

The Frontend

The final piece is the user-facing application. The frontend application and accelerator stack provides the tools and components needed to build a polished, intuitive user interface and applications on top of the entire orchestration system. This ensures a seamless and productive user experience and allows companies to bring new AI applications to market with unprecedented speed.

The Squirro Difference: A Single, Cohesive Platform

At Squirro, we've spent years in the trenches with customers, solving the complex orchestration challenges required to build trustworthy, enterprise-grade AI. We've seen firsthand how trying to build, integrate, and maintain each component individually leads to vendor lock-in, security vulnerabilities, and a fragile, high-maintenance system. Most companies are facing the challenge of getting all their disparate AI building blocks to work together seamlessly.

The Squirro Enterprise GenAI Platform was designed to eliminate the orchestration headache. Instead of forcing you to build and maintain a patchwork stack, Squirro offers an answer to enterprise AI's build versus buy dilemma with a single, cohesive platform that integrates seamlessly with the technology you already own. There’s no “rip and replace.” Instead, think of it as the connective tissue that brings together your existing systems, with built-in quality control and security.

But orchestration is only the start. True enterprise AI isn’t about searching data, it’s about activating it. By linking all your data – on customer intent, product feedback, and financial insights – inside a business-ready knowledge graph, Squirro helps your AI recognize patterns, anticipate needs, and deliver decisions you can trust. That’s how you move from data overload to business outcomes, with faster ROI and outputs your teams can actually rely on.

While others are left struggling to get their technological building blocks to work together, Squirro delivers the enterprise GenAI roadmap and the platform that connects them into something greater: a secure, infrastructure-agnostic foundation for AI at scale. We manage the complexity; you unlock the business value.